I don't know about you, but for me every single morning starts the same way now. I open Twitter (I still refuse to call it X), and within three scrolls I've been told that OpenAI just killed five more startups, Anthropic made the stock market lose a few billion dollars, and my job as a software engineer is officially over. Especially since the beginning of 2026. swyx even made a whole website tracking the chaos: wtfhappened2025.com. And honestly? Fair enough. A lot has happened.

But here's the thing. People have been saying some version of "AI will replace all jobs" for a few years now. Every year it's the same story with a slightly better demo attached to it. And the primary narrative, the one where everyone is unemployed and robots run the world? It hasn't actually happened. Not even close. So why hasn't AI taken over my job yet?

In my recent post on the AntStack blog, I wrote about how we shipped features and products with 100% AI-generated code. And not in a "look how cool this is" way. More in a "here's what actually happened and it's complicated" way. That post covers the nuances of what "all AI-generated code" actually means in practice, so here's my take on the rest of the panic.

Software engineering jobs are obsolete

Let's get the loudest take out of the way first.

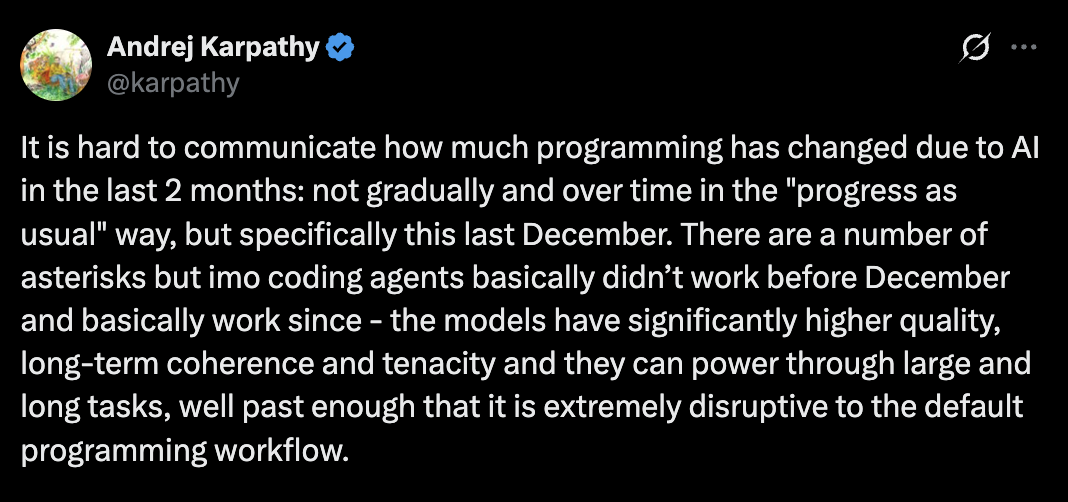

Yes, coding has become code generation now. You describe what you want, an AI agent writes it, you review it. That part is real. Andrej Karpathy wrote a whole thread about how programming fundamentally changed in the last couple of months. Not gradually over time, but specifically and dramatically.

And if you look at what's actually happening, the story is more nuanced than "everyone is getting fired." A lot of the layoffs you've been seeing are companies using AI as a convenient excuse to restructure and cut costs they were already planning to cut. Mandate AI adoption, call it an "efficiency push," and suddenly mass layoffs look like innovation instead of cost-cutting. A Harvard Business Review study even found that AI is intensifying workloads instead of reducing them, sparking what they call "AI burnout."

But here's where the "engineers are obsolete" crowd completely loses me. Writing code was never the hard part of software engineering. Understanding the problem, making architectural decisions, choosing between three valid implementations when all of them look fine, debugging something that only breaks in production on Tuesdays when there's a full moon. That's the job. The typing-code-into-a-file part? That was always the smallest slice of the work.

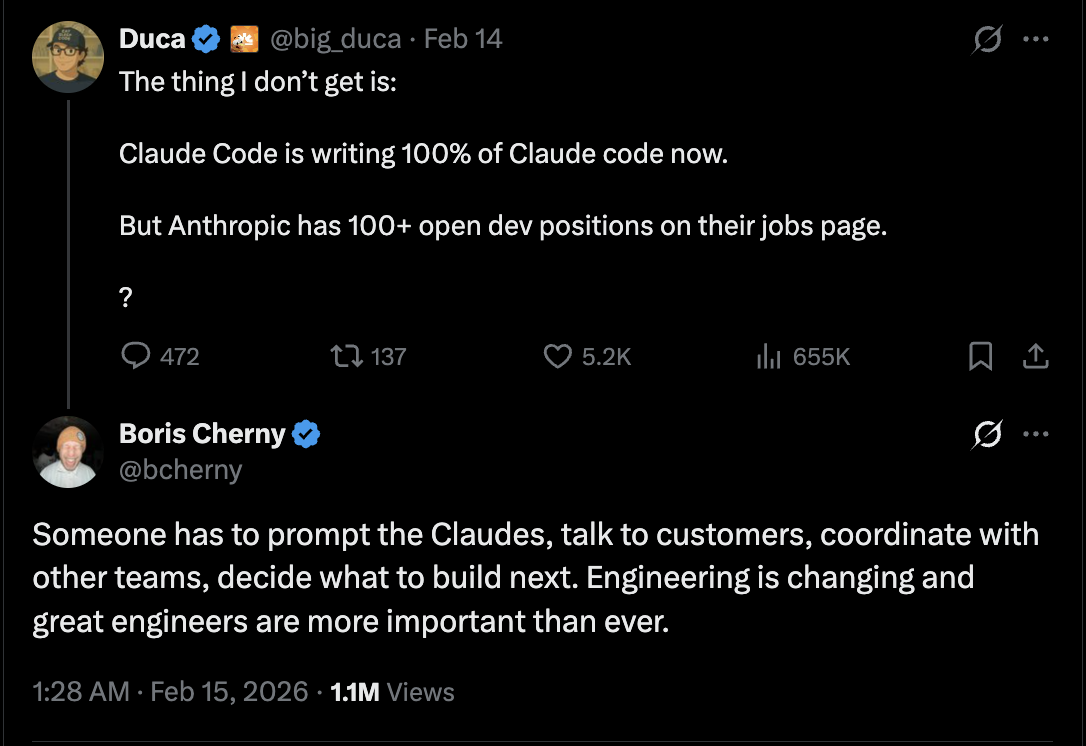

Boris Cherny, who created Claude Code, put it well. Someone still has to prompt the Claudes, talk to customers, coordinate with other teams, and decide what to build next. Even with the claim that the Claude Code team ships 100% AI-generated code, that doesn't mean someone who doesn't understand software could just join and ship at the same quality. You still need to know what you're building and why. And as someone pointed out from Dario's podcast with Dwarkesh, "90% of code written by AI" and "90% less demand for software engineers" are not the same statement. (Not even close.)

The upsides are real though. Someone who has an idea can now build a working prototype without needing a full dev team. You can spin up a tool for your local network, automate something annoying at home, actually build that side project you've been thinking about for three years but never started. The barrier to creating software has genuinely dropped. And that's a good thing. Less dependency on expensive SaaS tools for simple problems? I'll take that any day.

But the downsides are just as real. Not all AI-generated code is good quality. Security implications are piling up. Bug rates are increasing (something that came up at the Pragmatic Summit recently, and something we saw firsthand in our AntStack project). And the biggest problem: if you don't understand the "how" and "why" behind what the AI generated, you're building on a foundation you can't maintain. The moment something breaks in a way the AI hasn't seen before, you're stuck. And things always break in ways nobody has seen before. (Always.)

SaaS is dead

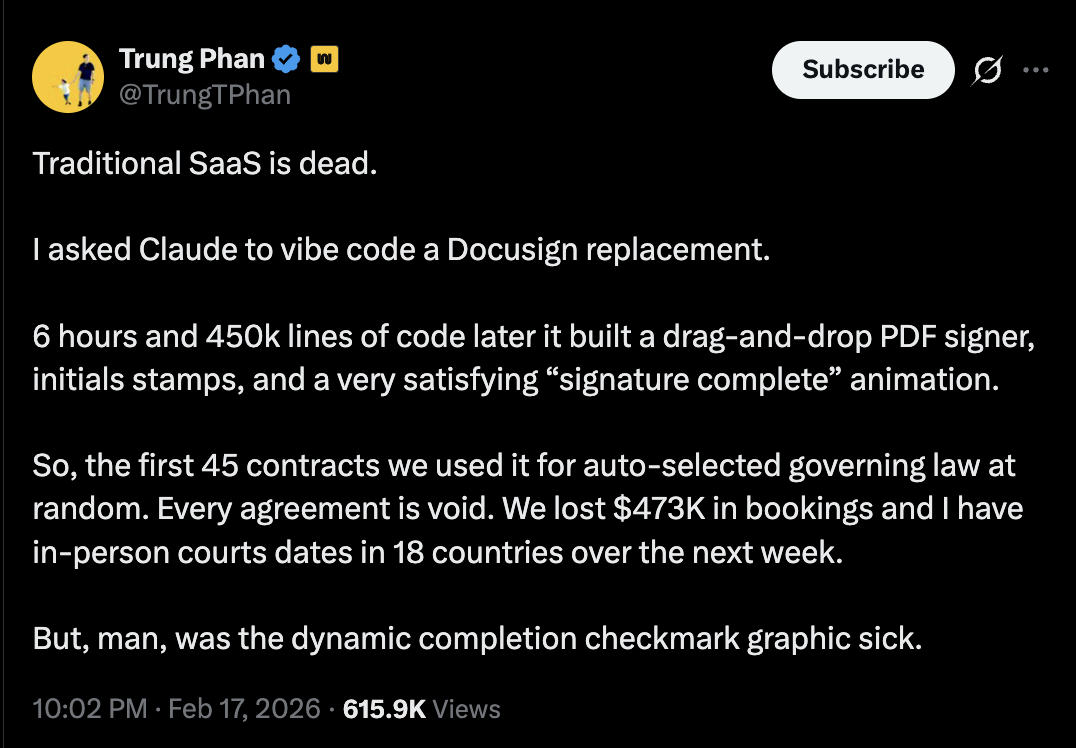

Speaking of building things yourself, the gap between "it works on my machine" and "it works in production" is the gap that the entire "SaaS is dead" crowd pretends doesn't exist. Building a demo is easy now. Genuinely easy. But building something that handles edge cases, compliance requirements, audit trails, and security vulnerabilities, and that doesn't fall apart when a thousand people use it at the same time? That's a completely different problem. And it's the problem that SaaS companies actually solve.

Can AI help you build a tool that replaces some simple SaaS product you were overpaying for? Absolutely. But the moment you're dealing with user data, authentication, payment flows, or anything that touches security and compliance, vibe-coded replacements stop being clever and start being dangerous.

Hollywood is over

This one is interesting because the progress is genuinely impressive. AI-generated video and creative content have gotten dramatically better in a short time.

That's cool. Really cool. But here's the part nobody wants to talk about. "Slop" was Merriam-Webster's 2025 Word of the Year. A dictionary-official word for the flood of low-quality AI content drowning the internet. Creators and content makers are watching their work get buried under a tidal wave of AI-generated noise. The fact that we needed a new word for this tells you how bad it's gotten.

And then there's the disrespect. When OpenAI dropped GPT-4o, millions "Ghibli-fied" their selfies. Hayao Miyazaki, the man whose art was being imitated, had literally called AI animation "an insult to life itself." That's not a creative tool. That's strip-mining someone's life's work. A Stanford study found that when an art marketplace allowed AI images, the number of human artists on it dropped by 23%. These aren't abstract arguments.

AI will be a tool in the creative toolbox, the way CGI was before it. But the "Hollywood is over" take has the same energy as "SaaS is dead." It confuses making the easy part easier with solving the hard part. And in the meantime, the slop is making the internet worse for everyone.

Coming back to the question

So, will AI take my job?

I think about it this way. Just because autopilot exists doesn't mean anybody can fly a plane. Skills and experience still matter.

The tools are getting better. What you can build alone is expanding. The way we write code is changing fast. All of that is true and all of that is exciting. But the people who actually build and maintain things that work, who understand the problems behind the code, who make the judgment calls that no AI can make for them? Those people aren't going anywhere.

So no, I'm not updating my resume to "professional prompt typer" just yet.

PS: Even this blog post is AI-generated. But not in the "Claude, write this blog post for me" way. I built a custom skill trained on my writing style, wrote the outline myself, researched every tweet and reference, dropped it all into a file, and fed that as context to generate the draft. Then a few rounds of corrections on top. Almost like the point I've been making this whole time.